AI Governance Foundations Every Organization Should Have Before Rolling Out AI

Organizations are feeling pressure to adopt AI, whether it’s coming from the board, competitors, or employees who are already taking matters into their own hands. IT directors, CTOs, and other leaders know the technology can boost productivity, but most are also aware of the potential risks.

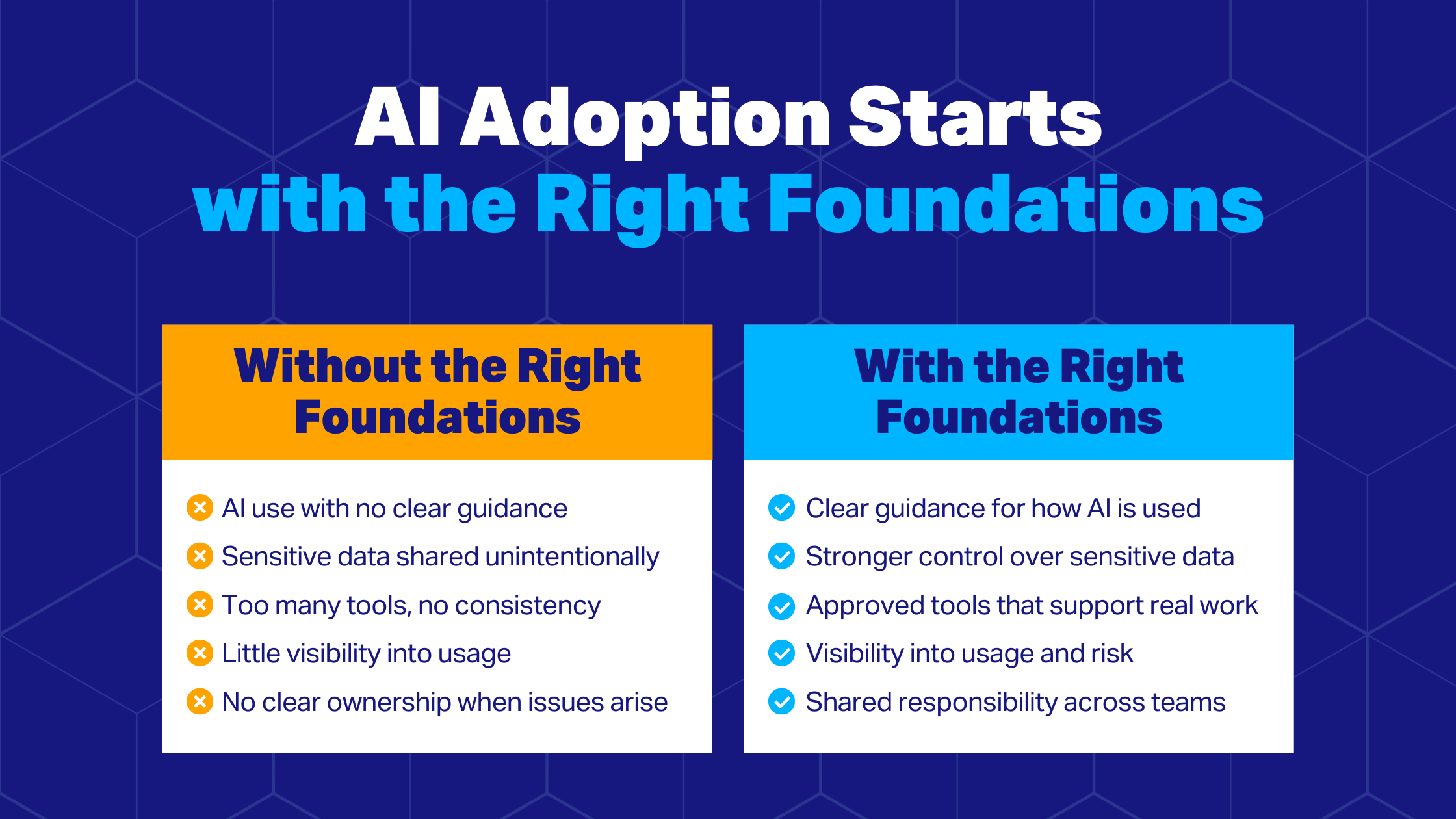

That tension is leading many companies down one of two paths: racing to adopt solutions and letting AI use spread with limited oversight, or hesitating while figuring out the right approach. Neither is an effective strategy.

"Some teams rush to roll out AI licences without any governance, controls, or monitoring," says Desirae Huot, Senior Modern Productivity Consultant at IX Solutions. "Suddenly, they start seeing exposed data, or people might start creating their own agents, and IT has no visibility into how people are using the technology."

AI systems are only as reliable as the data, governance, and security controls behind them. When those foundations are in place, AI investments can deliver meaningful ROI without compromising critical information or trust.

Where Organizations Get It Wrong

Businesses are embracing AI, yet many still lack a clear AI risk management strategy or usage policy. That lack of planning and structure usually leads to the same pitfalls as users start experimenting with software:

Data and Compliance Exposure

Teams often underestimate the risks when sensitive content and AI mix. "The biggest thing is that people are eager to start using AI without realizing the consequences of putting business information into external tools like ChatGPT," says Huot. "If you're inputting anything sensitive, you're essentially leaking that information."

That’s exactly what happened to Samsung in 2023 after a technician pasted proprietary source code into ChatGPT, which later resulted in a leak. Research suggests that the gaps behind incidents like this remain widespread: Accenture's 2025 State of Cybersecurity Resilience report found that 77% of organizations lack sufficient AI and security practices to defend their data. Only 7% say their data is AI-ready, meaning it's properly documented, labelled, cleaned, and access-controlled.

Risks compound when using third-party platforms. Companies might have little knowledge of or control over how vendors handle their data, including where it’s stored or whether it’s used to train models. Without enough oversight, companies risk both data loss and legal repercussions if their AI practices violate laws like GDPR or the EU AI Act.

AI Adoption Without Strategic Alignment

Enterprise AI is frequently driven by curiosity or competitive pressure rather than practical business outcomes. Decision-makers may approve solutions because they have innovative features or promise productivity gains. But unless those integrations are matched to broader goals, businesses can end up with a patchwork of systems that don’t support real workflows.

That can create unnecessary complexity and costs. Teams experiment with new platforms, but few actually stick and improve day-to-day tasks. In some cases, this also fuels shadow AI: if employees feel that official tools aren’t helpful, they may seek alternatives on their own.

Shadow AI

Shadow AI, the unauthorized use of AI within an organization, is increasingly common. According to IBM, 79% of Canadian office workers use AI on the job. Among those users, nearly seven out of ten use unofficial AI applications.

This creates serious visibility challenges for IT and makes it harder to stop sensitive information from entering AI systems. A 2025 report from Reco suggests that some of the most popular unsanctioned applications also lack basic security features like encryption and multi-factor authentication.

Accountability Vacuums

It might seem logical to assign AI decision-making to a single department or individual, even creating a new role. However, successful AI adoption works best as a cross-functional effort. The technology touches multiple domains, including IT infrastructure, cybersecurity, data management, legal compliance, HR, and business strategy. When governance is treated as a siloed initiative, blind spots can quickly emerge.

Key Foundations for Scaling Enterprise AI

Strengthen Data Loss Prevention First

Before deploying new platforms, IT should start with an AI readiness assessment focusing on data loss prevention. Do staff copy or upload sensitive information into AI tools? Can IT monitor how data is used with AI? Are files encrypted? Is content being overshared internally?

Several technical safeguards can reduce risk and should be considered if your existing security posture is lacking:

Data encryption: Protect files at rest and in transit so information stays protected if it leaves secured systems.

Application blockers: Automatically prevent access to unapproved AI and steer users toward sanctioned options within secure environments.

Sensitivity-based AI restrictions: Classify content to prevent AI tools from accessing, analyzing, or processing sensitive files.

Access management: Use centralized access and data governance controls to control, at a granular level, how information is shared and who has access.

Data retention systems: Monitor and manage how long information is stored and how outdated content is archived or deleted.

Device management: Control AI permissions on phones, laptops, and other devices.
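To make the idea of sensitivity-based restrictions more concrete, here is a minimal sketch of a check that screens text before it reaches an external AI tool. The pattern names, the regular expressions, and the `allow_prompt` helper are all illustrative assumptions; a real deployment would more likely rely on the classifiers in a dedicated data loss prevention product than on a short list of patterns like this one.

```python
import re

# Hypothetical patterns for illustration only. They are deliberately simple
# and will miss many real-world cases.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "credit_card_like": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "secret_key_like": re.compile(
        r"\b(?:api[_-]?key|secret|password)\s*[:=]\s*\S+", re.IGNORECASE
    ),
}


def find_sensitive(text: str) -> list[str]:
    """Return the name of every pattern that matches somewhere in the text."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


def allow_prompt(text: str) -> bool:
    """Allow the prompt only when no sensitive pattern is found."""
    return not find_sensitive(text)


if __name__ == "__main__":
    for prompt in [
        "Summarize the key themes of our Q3 roadmap",
        "Draft a reply to jane.doe@example.com about the renewal",
    ]:
        print(prompt, "->", "allowed" if allow_prompt(prompt) else "blocked")
```

A check like this is only a first filter. It works best alongside the classification labels and access controls described in the rest of this section, not as a replacement for them.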

Third-party platforms also deserve careful vetting. Huot suggests asking vendors how they’ll use your information (including whether it’ll train the model), what security infrastructure they have in place, and where your information may be stored. This is crucial from a compliance perspective, since providers may route your information through jurisdictions with different data residency or privacy rules.

Build Strong Data Governance

As the saying goes, “garbage in, garbage out.” Good data governance improves data quality for more accurate AI outputs and helps keep systems compliant. A strong approach involves:

Cataloguing data. Take an inventory of all your data, including structured and unstructured content, internal databases, and third-party sources. The goal is to know what the organization has and where it’s exposed or of low quality.

Classifying data. Data should be labelled according to risk and sensitivity level. This determines what AI systems are allowed to access and what information intersects with data privacy laws.

Improving data quality. AI systems perform best when inputs are accurate, complete, and consistent. You may need to remove duplicates, standardize formats, archive outdated records, and correct errors to make data AI-ready.

Enforcing access controls. Approaches like role-based access control (RBAC) ensure only authorized users handle sensitive content. Monitoring systems should also track usage, helping IT detect unusual or unauthorized behaviour.
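The classification and access control steps above can be sketched together in a few lines. This is a simplified illustration, not a reference design: the four sensitivity levels, the role names, and the `AI_MAX_SENSITIVITY` ceiling are assumptions chosen for the example, and most organizations would enforce these rules through their identity and data governance platforms.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical labels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Assumed mapping of roles to the highest label each role may read.
ROLE_CLEARANCE = {
    "contractor": Sensitivity.PUBLIC,
    "employee": Sensitivity.INTERNAL,
    "finance": Sensitivity.CONFIDENTIAL,
}

# Assumed organization-wide ceiling for content that AI tools may process.
AI_MAX_SENSITIVITY = Sensitivity.INTERNAL


@dataclass(frozen=True)
class Document:
    name: str
    label: Sensitivity


def user_can_read(role: str, doc: Document) -> bool:
    """Role-based check: unknown roles are denied by default."""
    clearance = ROLE_CLEARANCE.get(role)
    return clearance is not None and doc.label <= clearance


def ai_can_process(role: str, doc: Document) -> bool:
    """AI access requires both user access and a label under the AI ceiling."""
    return user_can_read(role, doc) and doc.label <= AI_MAX_SENSITIVITY
```

Under these assumptions, a finance user could open a document labelled CONFIDENTIAL, but an AI assistant acting on that user's behalf could not process it, because the label sits above the AI ceiling.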

Validate AI Use Cases

Rather than acquiring solutions simply to stay current, leaders should identify what the organization actually needs AI to do. One practical starting point is looking at how employees already use AI, even if it’s shadow AI. This can reveal where the technology will be most valuable and where productivity gaps exist.

Huot also recommends assessing workforce readiness when choosing tools and designing your AI roadmap, as this will inform the initiative’s pacing and user training. A 2025 study from KPMG found that four out of five Canadians remain concerned about risks like cybersecurity, privacy, and misinformation when using AI.

“We want to approach it from a crawl, walk, run perspective,” says Huot. “Once things are secured, you can pilot the tools with a small group of users until people are more comfortable. Then, you can scale the rollout organization-wide and look at more advanced use cases.”
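For teams that want to start by reviewing how employees already use AI, one option is to tally requests to known AI tools from exported network or proxy logs. The sketch below assumes each log row is a dictionary with `department` and `domain` keys; the domains, tool names, and sanctioned list are placeholders, not real services.

```python
from collections import Counter

# Placeholder domains and tool names for illustration only.
AI_TOOL_DOMAINS = {
    "chat.example-ai.test": "ExampleChat",
    "assistant.example-llm.test": "ExampleAssistant",
}

# Assumed list of tools the organization has approved.
SANCTIONED_TOOLS = {"ExampleAssistant"}


def summarize_ai_usage(log_rows):
    """Count requests per (department, tool), ignoring non-AI domains."""
    counts = Counter()
    for row in log_rows:
        tool = AI_TOOL_DOMAINS.get(row["domain"])
        if tool is not None:
            counts[(row["department"], tool)] += 1
    return counts


def shadow_ai_by_department(counts):
    """Total requests to unsanctioned tools for each department."""
    shadow = Counter()
    for (department, tool), n in counts.items():
        if tool not in SANCTIONED_TOOLS:
            shadow[department] += n
    return shadow
```

Departments with heavy unsanctioned usage are often good candidates for an early pilot, since they have already shown where they expect AI to help.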

Draft A Clear AI Policy

An effective AI acceptable use policy does a few things: it reduces risks like data leakage and IP loss, supports compliance with privacy and AI regulations, and creates the documentation trail you'll need to demonstrate accountability if something goes wrong.

If you’re not sure where to start, first define the company’s core values and mission, considering your risk tolerance and what you want to achieve with AI long-term. You can then turn these principles into a policy that answers questions like:

Which AI platforms are approved?

What tasks are users allowed to perform with AI?

What types of data can enter AI systems?

How can staff use AI critically to avoid risks like overreliance?

Who is accountable for misuse?
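Some teams find it useful to capture the answers to these questions in a machine-readable form so that tooling can check requests against the policy. The following is a minimal sketch under that assumption. The `AIUsePolicy` structure, the example values, and the `check_request` helper are hypothetical, and the critical-use question is left out because it is a training matter rather than something a rule can check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsePolicy:
    approved_platforms: frozenset[str]   # Which AI platforms are approved?
    allowed_tasks: frozenset[str]        # What tasks may users perform?
    allowed_data_types: frozenset[str]   # What data may enter AI systems?
    accountable_owner: str               # Who is accountable for misuse?


# All values below are made-up examples, not recommendations.
POLICY = AIUsePolicy(
    approved_platforms=frozenset({"approved-assistant"}),
    allowed_tasks=frozenset({"drafting", "summarizing", "brainstorming"}),
    allowed_data_types=frozenset({"public", "internal"}),
    accountable_owner="ai-governance-committee",
)


def check_request(policy: AIUsePolicy, platform: str, task: str, data_type: str) -> list[str]:
    """Return a list of policy violations; an empty list means the request is allowed."""
    violations = []
    if platform not in policy.approved_platforms:
        violations.append(f"platform '{platform}' is not approved")
    if task not in policy.allowed_tasks:
        violations.append(f"task '{task}' is not permitted")
    if data_type not in policy.allowed_data_types:
        violations.append(f"data type '{data_type}' may not enter AI systems")
    return violations
```

Returning every violation, rather than stopping at the first, gives users a complete explanation of why a request was refused and points them back to the relevant part of the policy.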

An effective AI governance framework integrates these policies into employee training and has regular refreshers to cover new software, risks, or policy changes. While lengthy documents are often needed for compliance purposes, you can also translate them into shorter, practical reference guides for everyday users.

Make AI Governance A Collective Responsibility

An AI governance framework isn’t just an IT project; it’s a company-wide effort. When planning for integration, involve stakeholders across IT, leadership, legal, HR, and other relevant departments. Each group brings a different perspective that helps surface risks and opportunities.

In practice, these conversations don't always go smoothly. For example, executives may pressure IT to move faster on AI adoption, or question why restrictions are necessary when employees are fully on board with tools like ChatGPT.

The key is to frame AI governance as a business risk management issue, not a purely technical one. "A lot of it is education," says Huot. "From an IT perspective, it's important to clearly communicate where the risks are. Leadership must recognize that AI guardrails enable rather than hinder performance. With robust governance, organizations can safely unlock deeper insights from internal data and achieve superior outcomes without compromising security."

An external advisor can be valuable here, too. Beyond offering current AI and security expertise, IT providers like IX Solutions can help move stakeholder conversations forward by aligning AI planning with broader business goals.

Success Starts With Strong AI Governance

The organizations seeing the greatest ROI with AI aren't necessarily the ones moving the fastest, but the ones that took the time to build the right foundations first. A thoughtful AI governance framework empowers businesses to stay innovative and strengthen productivity without jeopardizing data, compliance, or trust.

"Security is of the utmost importance," Huot says. "When you guide people to use AI securely, you get the best outcome: broad adoption with none of the avoidable risk."

IX Solutions collaborates with your team to evaluate your AI readiness, deliver a secure and compliant implementation, and develop adoption strategies that maximize value. If you're ready to build out your AI roadmap, get in touch with the IX Solutions team.